The top 10+1 things we love about Snowflake

Introduction

I have been familiarising myself with Snowflake over the last couple of months and these are my impressions on the top 10+1 features that really make Snowflake stand out compared to other cloud based data warehouse solutions.

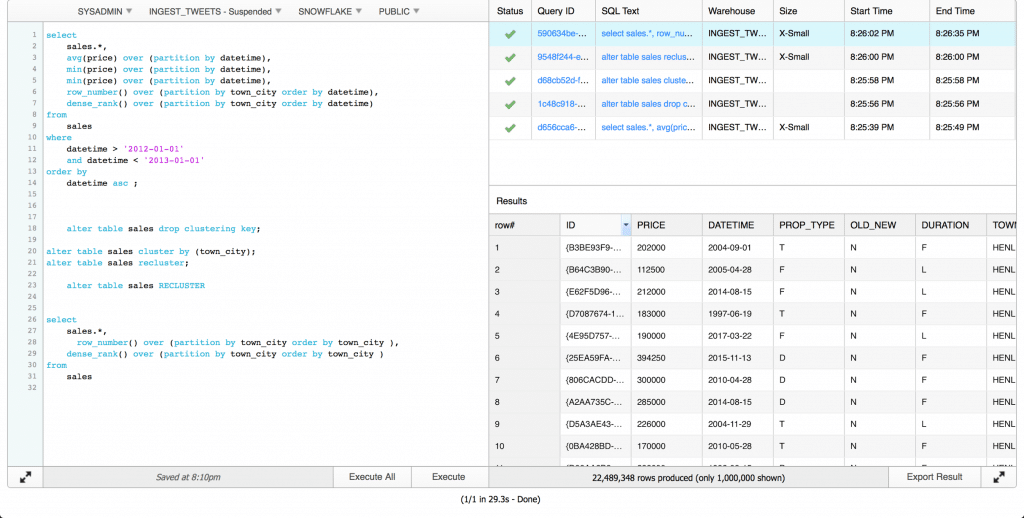

1. Results of queries are stored and can be viewed in query history

The fact that Snowflake stores the history of queries you ran as well as their results is a really convenient feature. The results are kept up to 24 hours or shorter if you run a large number of queries. This is very convenient if you are running the same query more than once because it saves you processing time and money.

2. SQL Worksheets

You can write your queries on the SQL Worksheet in the left side of your Web Interface and check the history of your queries as well as the results of queries on the right side. I find the web interface to be much nicer than most database clients like DBeaver or SQL Developer.

3. Elastic computing

The compute engine (Warehouses) can be turned on and off very quickly. You can also set an auto-suspend to automatically turn-off your warehouse when it’s not being used for a set period of time. This also works very nicely with the per-second billing. Elastic sclaing of resources allows you to run workloads with different characteristics in separate virtual warehouses. For example you might need more resouces for your month end reporting queries compared to daily data imports. Furthermore you can increase the concurrency of Snowflake by adding more warehouses.

4. Excellent documentation

Snowflake overall has excellent documentation. Almost every feature is very well documented and explained. The support forums are very well organized and I expect even more information will be there as time progresses.

5. Minimal administration

There is no installation required (or much preparation) to use Snowflake. There aren’t any concerns whether your hard disk is big enough or your servers network is configured properly. Connections to Snowflake (JDBC, ODBC…) are very simple to establish. The IT distractions are really minimal which allows you to focus on development.

6. Support for very simple Javascript UDFs and UDAFs

In most database systems creating UDFs and UDAFs is not a very pleasant experience. With Snowflake this is a very simple and enjoyable process. You can read our post on how to create your custom UDAFs to see how easy it is.

7. Excellent SQL support

Snowflake supports most DDL and DML defined in the SQL-99 standard as well as parts of the SQL-2003 analytic extensions. We already covered how well window functions are supported on Snowflake. Snowflake also supports the MERGE statement which is not supported by most of it’s competitors.

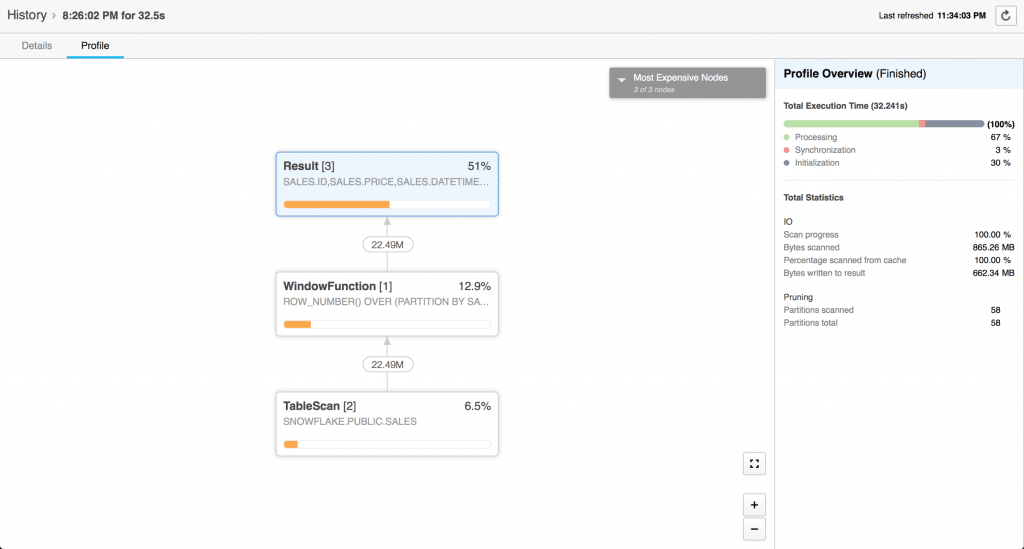

8. Visual execution plans

In Snowflake you can very quickly view the execution plan of a query you ran by investigating the history of a query:

While textual execution plans are a but more detailed (Amazon Redshift), they aren’t as intuitive as these beautiful plans.

9. Clone command and Data Sharing

Snowflake allows you to quickly create a copy of objects such tables, schemas or databases using the CLONE command. The advantage of CLONE command is that it copies the metadata of your object. For example if you clone a database it will keep the user access privileges. Or if you clone a table it will inherit the metadata such as clustering keys (as we showed in previous blog post) and history of column renaming. Furthermore a clone does not contribute to the overall data storage for that object until operations on the clone that modify existing or add new data are performed.

10. Custom connectors (Spark, Python)

The Apache Spark connector for Snowflake allows Spark to push query processing to Snowflake when Snowflake is the data source. Query pushdown is extremely beneficial as it minimizes the effect of network speed on query execution. The connector also offers a richer API than the standard JDBC driver.

While the Python connector doesn’t have any special features, it is extremely convenient for connecting to Snowflake. It can be easily installed using a package manager compared to JDBC or ODBC drivers which you have to download and configure.

10+1. Data Sharing

Normally, B2B data exchange, i.e. exchanging data between enterprises is a painful process. Typically it’s handled via XML and a joint canonical schema, e.g. a schema based on an industry data standard. The data first needs to be converted from a relational database to XML files. Then it is sent via some secure transfer (SFTP etc.) to a central location. From there the receiving party converts it back to a relational format. Sonra’s own ETL tool for XML/JSON can automate that process.

However, Snowflake also has a data Sharing feature that allows data providers to create a share of a database in their account on AWS and grant access to specific objects (tables, databases…) to other accounts. For example other accounts could be different organizations. The main benefit of Data Sharing is that no actual data is copied or transferred between accounts. The data does not take up any storage space in the data consumers account. The only charges to consumers are for compute resources used to query the shared data.

Enjoyed this post? Have a look at the other posts on our blog.

Contact us for Snowflake professional services.

We created the content in partnership with Snowflake.