Scripting in Oracle Data Integrator: Jython, Java BeanShell, Java, Open Tools, ODI API & SDK, ODI Substitution API

Get our e-books Discover the Oracle Data Integrator 11g Repository Data Model and Oracle Data Integrator Snippets and Recipes

It’s been a while since my last post. We had a baby recently. Not so much time for this blogging stuff anymore.

Anyway here is another post on the various scripting options in ODI. It gives a brief overview when to use each and the advantages and disadvantages.

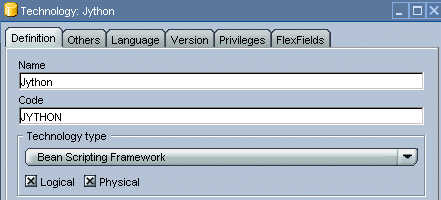

Jython

Jython is an implementation of Python that runs in a Java Virtual Machine (JVM). It includes a compiler that compiles Python source code down to Java bytecode which then runs directly in the JVM. Using Jython you can run Python scripts that interact with Java. This means that you can use any of the Java and Python libraries. This makes Jython extremely powerful as you can reuse any of your existing Java or Python code.

Oracle Data Integrator includes the Jython Interpreter within the execution agent. The version of Jython that is included with ODI 10 is Jython 2.1 and afaik this has (strangely) not changed in ODI 11. However, you can easily upgrade your version of Jython by replacing the jython.jar with a newer version. You can start a Jython console and execute Jython code from the command line by launching jython.bat or jython.sh from the oracledibin folder.

Jython is one of the scripting languages that is supported by the Jakarta Bean Scripting Framework (BSF).

Jython is the prefered option when you need a quick and dirty scripting solution where reusability is of minor importance.

Want to find out more about Jython?

[big_data_promotion] Great Jython cheat sheet by the guys from ODI experts. Covers a lot of the recurring Jython code snippets that you need for ODI. Thanks Cezar and Dev.

The Definitive Guide to Jython: Python for the Java Platform.

Jython Essentials (O’Reilly Scripting)

Java Bean Shell

The Java BeanShell is another BSF language. The BeanShell dynamically interprets Java source code and provides scripting extensibility for your applications. As a result you can just use it like Jython for your scripting purposes. However, in note 423905.1 Oracle recommends to use Jython for customisations that require Java classes.

To be consistent we personally use Jython for all our scripting requirements. As far as I know the scripting steps of most of the Knowledge Modules are exclusively written in Jython (the IKM File to Teradata (TTU) is one of the exceptions). So why do we need the Java BeanShell? The BeanShell is great for manipulating results and interacting with the Substitution API. It is also great for mixing and matching with the other technologies. This is great for conditionally executing code inside the other ODI technologies. It is also very useful for manipulating values returned by the API, e.g. you may have a requirement to replace certain characters returned by a method call to the API.

You can think of the relationship between the BeanShell and the other technologies in terms of ASP or JSP and HTML.

Whenever possible you should encapsulate any complex logic inside classes and methods. This makes code more readable and also reusable.

We can then call the above method where needed.

Have a read of my posts on nesting Java BeanShell code in ODI and part II. In the posts I explain some of that cryptic <% %> <? ?> <@ @> stuff.

Also refer to

Scripting in Java: Languages, Frameworks, and Patterns

Java

Java classes as defined in the example in the previous section can not be called across steps in a knowledge module or procedure. If you want to persist your classes and make your code even more readable and reusable you should compile them into .jar files.

I have explained how to do this in another post on the site.

The big disadvantage of this approach is that you need to recompile your code and restart your agent whenever you make a change to your class.

Open Tools

Tools in Oracle Data Integrator are used to perform common data flow tasks such as putting files to an FTP server or sending e-mail alerts. Similar to the other technologies you can mix and match the tools with the Java BeanShell, SQL and ODI variables. ODI comes with a whole set of pre-defined tools. Generally these are sufficient for the most common data flow tasks and from our experience cover at least 80% of requirements. However, sometimes the Tools at hand don’t offer a specific functionality or have bugs. That’s when you need to write your own Tool using the Open Tools SDK. Tools are written in pure Java and need to be compiled and imported into ODI. So the high degree of reusability comes at a price.

ODI API & SDK

ODI 11G ships with an SDK. I have blogged about this in a previous post.

I can see the following use cases for this.

(1) Automating migration of data flows and process flows from another ETL tool.

(2) Scripting data flows, e.g. let your business analysts perform source to target maps in Excel and then use the SDK to create interfaces from the Excel sheets

(3) Creating interfaces at runtime and on the fly, e.g. I recently had to load data from several hundred Access databases. Not all of these followed the same table structures. For the offending tables interfaces could have been created at runtime.

(4) Automated data extracts, data migration and replication: Based on data dictionary information in a source database script interfaces to generate data extracts etc.

Download ODI SDK code samples.

ODI Substitution API

The ODI substitution API exposes methods to query metadata in the ODI repository. In theory you can connect to the work repository and directly send queries against it. This is not good practice however, as the data model of the repository may change with a new release and as a result queries may not work. The ODI substitution API uses direct calls to the ODI Java methods. These java methods typically return string values. They all belong to the odiRef object instance. Calls to the API do not always return values but depend on the context, e.g. a call to the source column list only makes sense in a knowledge module.