Converting XML/XBRL from SEC EDGAR. Data sharing with Snowflake

In this blog post we use Flexter to convert XML and XBRL in SEC EDGAR submissions to a Snowflake database and analyse the output with SQL.

Flexter is available as an enterprise edition. The enterprise edition can be installed in the cloud or on-premise.

Table of Contents

What is EDGAR?

Companies in the US are required to file certain forms with the Securities and Exchange Commission SEC. Companies make their SEC submissions to EDGAR, the Electronic Data Gathering, Analysis, and Retrieval system. The system processes about 3,000 filings per day, serves up 3,000 terabytes of data to the public annually, and accommodates 40,000 new filers per year on average.

The SEC make the submissions data available to the public. Submissions can be downloaded from the SEC website. Each submission by a company contains one or more documents. These documents are stored in text files with the extension .nc.

Let’s look at some examples to see what is inside the .nc files. We have downloaded an archive of filings for one day.

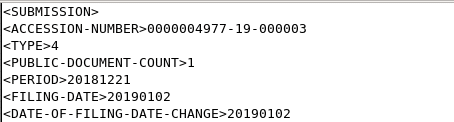

In the first part of the .nc file we can find some general information on the filing, e.g. the unique identifier of the submission (ACCESSION-NUMBER), the company name, the number of documents in the submission etc. This information is delivered in markup language and only contains closing tags for some elements.

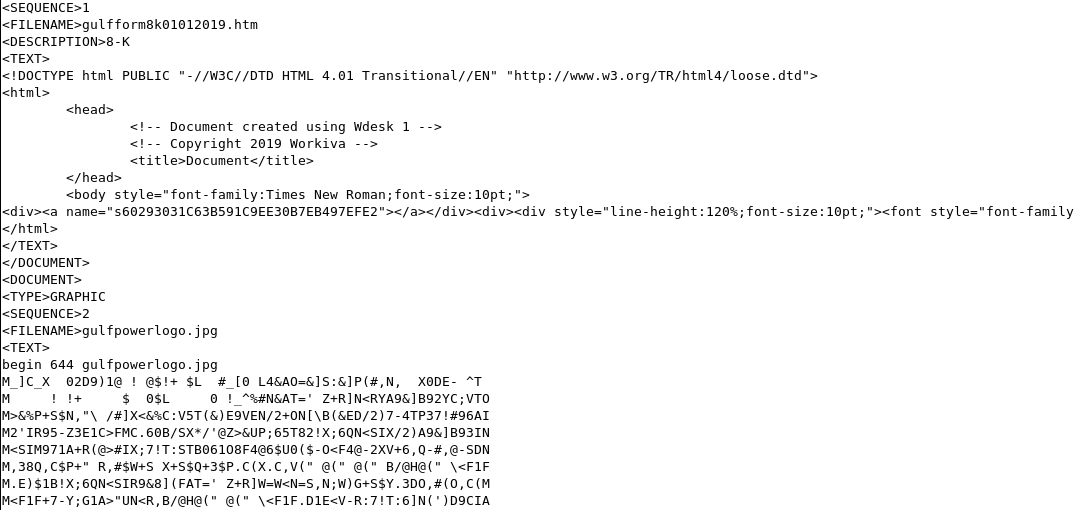

Let’s look at some other document types inside the .nc submission file.

As we can see the first document in this submission is an html file. The second is a jpg file in binary format.

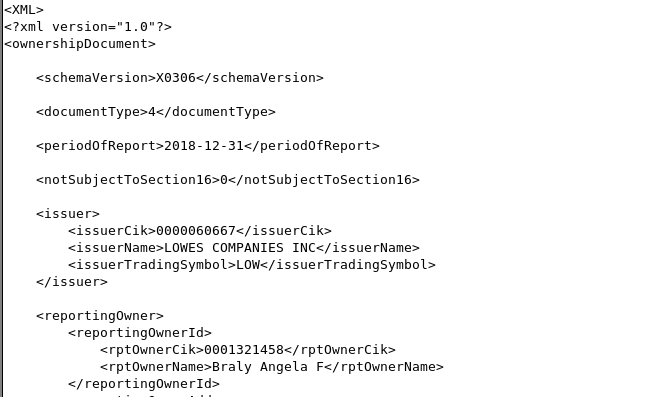

A lot of the documents are in .xml format.

Some of the XMLs are XBRL documents.

Preprocessing the EDGAR submissions

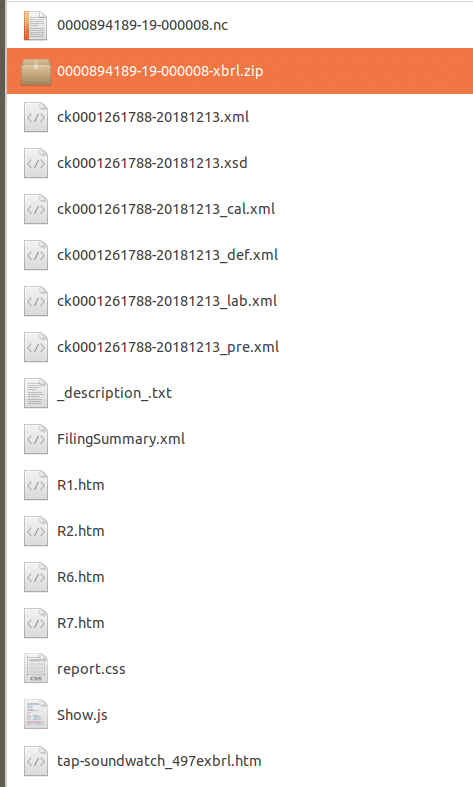

Before we can process the data we need to split various documents contained in an .nc file into their own files. We wrote a script to do this. The results can be seen in the figure below.

In a next step we converted the high level information about the submission into valid XML by adding closing tags to all elements:

Becomes

Converting EDGAR XML with Flexter to Snowflake

Now that our data is in good shape, we convert the XML / XBRL documents with Flexter to a relational format in Snowflake.

With Flexter it’s a four step process (five if you have an XSD) to convert your XML to a database such as Snowflake.

Step 1: Authentication. Once you have signed up for the service you will get a username and password for authentication.

Step 2: We create a connection to the target data store. In this case a Snowflake database. Other supported target databases are Oracle, SQL Server, PostgreSQL, Redshift, MySQL, CSV on S3, download. We add to this list over time.

Step 3: We create a connection to the source data. In this particular case we loaded the XMLs to S3 object storage. Flexter also supports data upload.

Step 4: All that is left now is to run the conversion

Flexter supports web hooks and you can get notified once your conversion has finished.

Analysing and querying the output of Flexter

Let’s have a look at the output of Flexter in more detail.

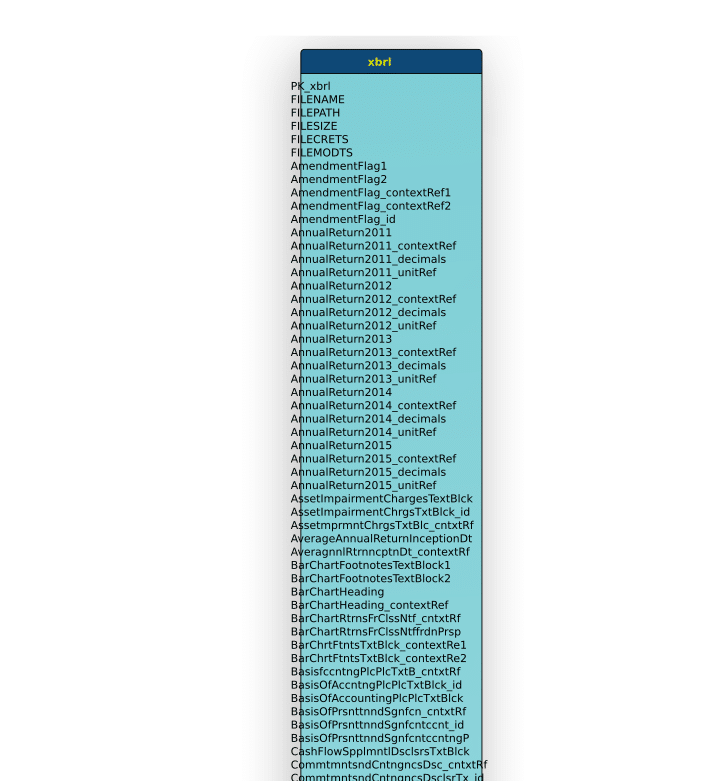

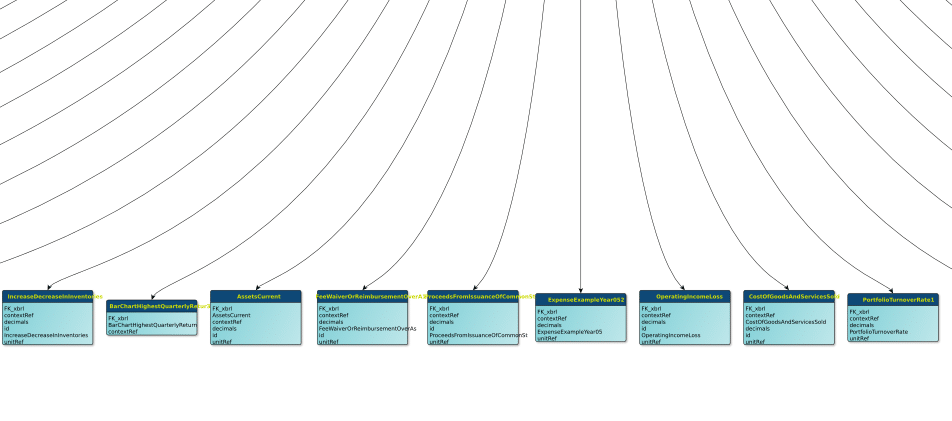

With almost 500 tables, the schema is quite large.

Flexter created a unified schema with multiple roots. This tells us that we are dealing with multiple different schemas and different types of XML files inside the daily archive we downloaded from the SEC website.

Let’s have a look at some of the root tables.

XBRL

One of the root tables is a table named XBRL. It has the highest number of child tables. It is very wide but only has a depth of three levels.

In the figure above, the XBRL table is the long thin line. Underneath are all of its children as little dots.

Let’s zoom in a little.

… and some of the children

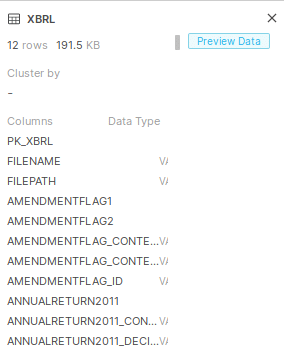

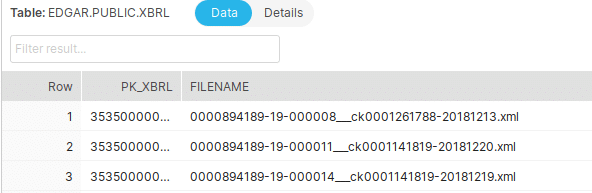

Let’s connect to Snowflake to look at the data in the XBRL table itself.

The table has more than 100 columns but only contains 12 rows of data

Let’s query the table. As we can see Flexter auto-generated globally unique keys and also added some metadata to the target tables such as the name of the submission file we loaded from.

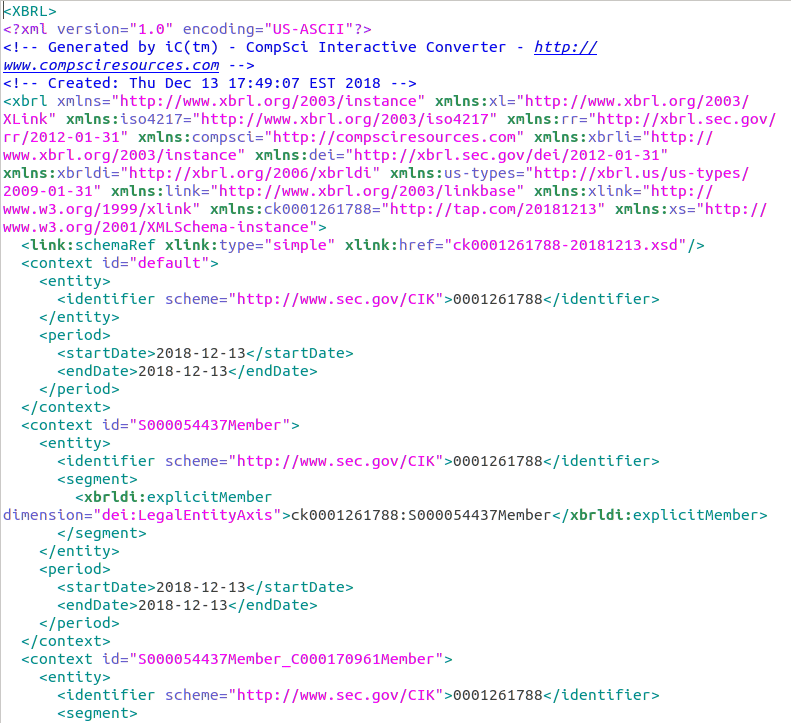

Let’s have a look at the content of the first file in this list 0000894189-19-000008___ck0001261788-20181213.xml

As expected it is an XBRL document. One use of XBRL is to define and exchange financial information, such as a financial statement. XBRL is widely used by regulators of stock exchanges. We extracted raw xml from this XBRL document and processed it with Flexter

Let’s look at some of the other root tables

Submission

If you remember, the Submission is at the beginning of each of the files. It contains descriptive information on the submission itself, e.g. the company name. It is written in a markup language. We converted it to valid XML as a pre-processing step before feeding it to Flexter.

Below is a figure of the relational schema for the XML Submission. It is relatively simple and just made up of a few tables.

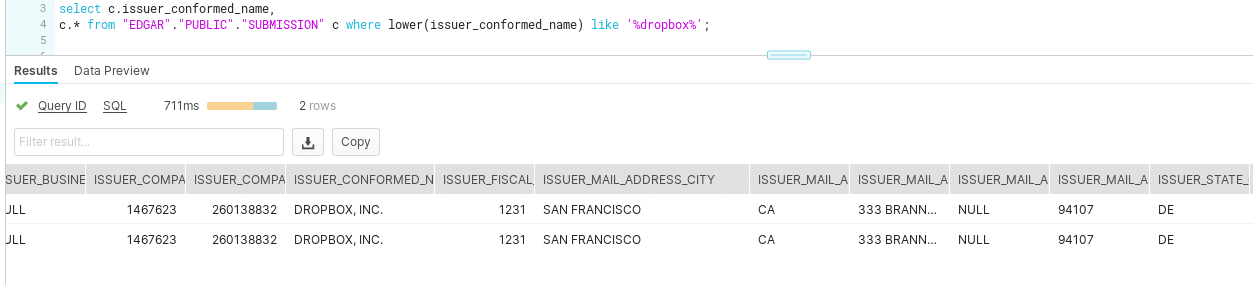

Let’s see if we find submissions for some well known tech companies, e.g. let’s query for Dropbox.

Ownershipdocument

The table ownershipdocument is another interesting root table. It contains ~1.7K records.

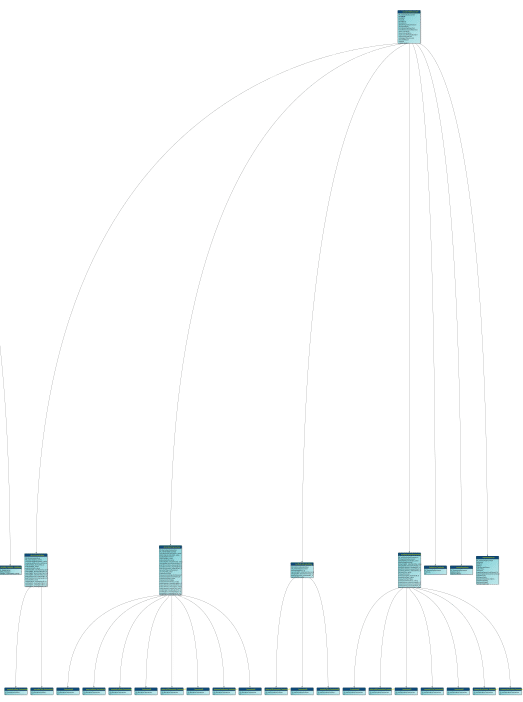

Let’s look at the schema

Querying data across schemas

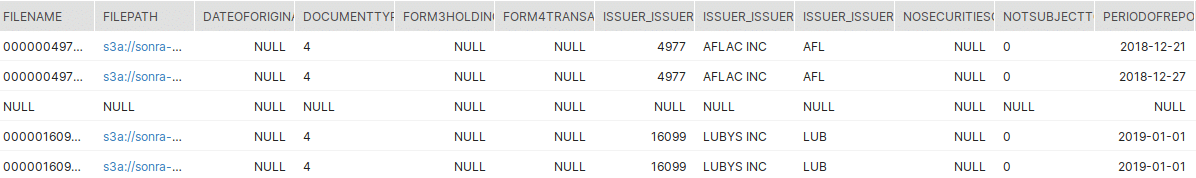

Using the filename metadata from Flexter we can query data across the various schemas, e.g. retrieve information from both the Submission and Ownershipdocument tables.

Here is the query:

|

1 2 3 4 5 |

SELECT * FROM "EDGAR"."PUBLIC"."SUBMISSION" a LEFT JOIN "EDGAR"."PUBLIC"."OWNERSHIPDOCUMENT" b on substr(a.filename,0,20) = substr(b.filename,0,20) ; |

And here the result

Data Sharing in Snowflake

In a last step we will show you how you can share the EDGAR submission data with third parties. We will use a feature in Snowflake called data sharing. Data sharing is a very useful feature as you can securely and easily share data with other users. Third parties don’t require a Snowflake account of their own. Let’s go through the steps of enabling data sharing in Snowflake.

First of all let’s login to Snowflake.

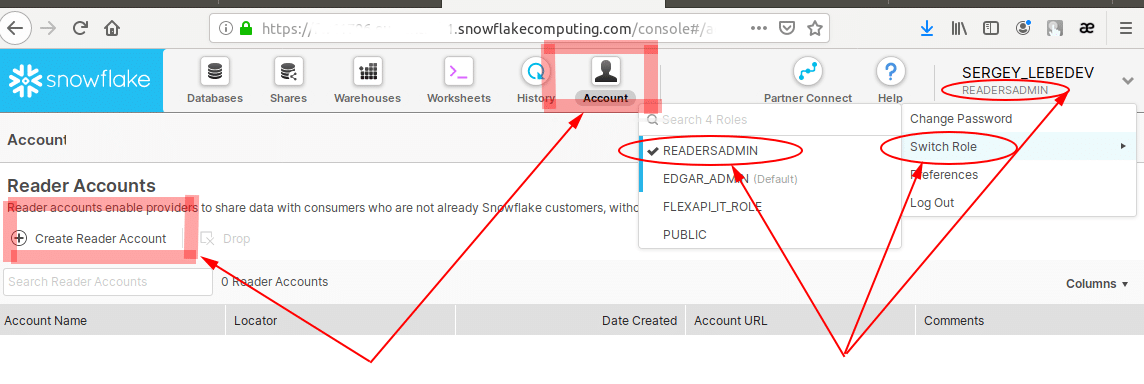

Please, make sure you have READERSADMIN privileges in order to create reader accounts for the data you intend to share, and you switch your role from default to READERSADMIN.

Next you need to add recipients for your data. Click the “Account” tab on the top panel, and then select the radio button “Create Account” .

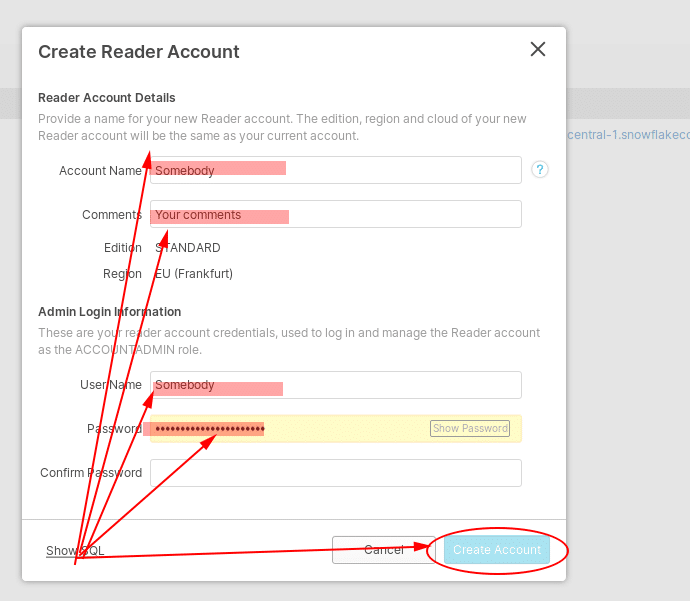

Fill out the mandatory fields “Account name” (your reader account), “User Name” (your reader name) and two matching passwords. Do not forget to confirm your input by clicking “Create Account” button.

Once you have created the new account you can pass on the login details (username, password, URL) to the third party to share the data.

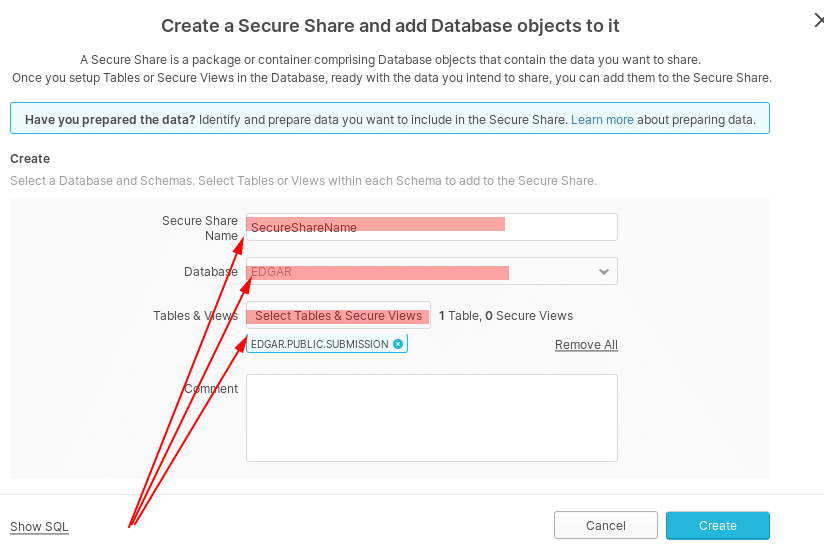

Next we will share some tables and data with the third party. Click on the “Share” tab on the top panel, and then click the radio button “+ Create”.

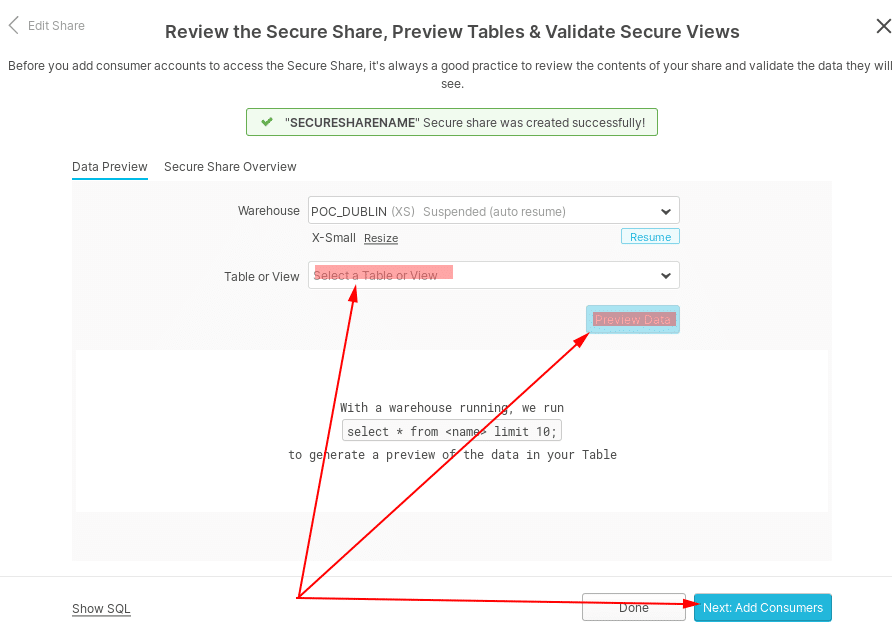

You need to fill out all the mandatory fields, e.g. the the tables and views you would like to share. Confirm your input by clicking the “Create” button.

In a next step click the button “Next Add Consumers”.

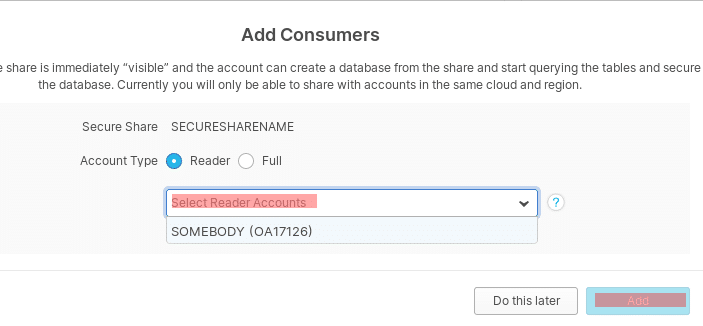

Next assign the Reader accounts to share this particular set of tables or views.

Then, click “Add” and the shared data from Snowflake is ready to be used by your readers.

Logging on as Reader

As the reader/consumer you need to login to the given URL with your credentials. Once logged select the ”Share” button and click the “Create Database From Secure Share” radio button.

Once the database has been created you will be need to create your virtual warehouse.

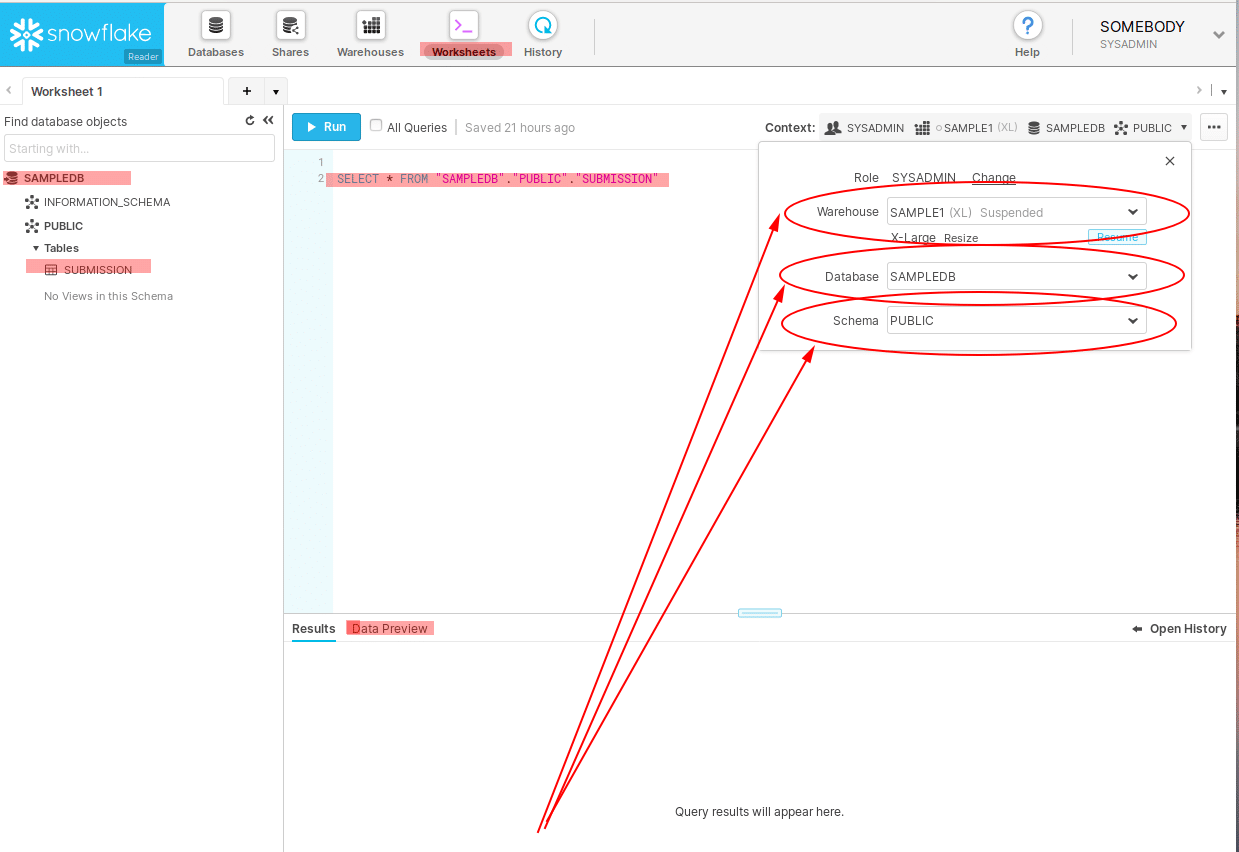

After this you have to refer to the context menu, where you have to choose database, schema and warehouse.

On the database tab, select the database shared with you, then the specific table or view.

In order to access the data you need to complete the query on the tab “Worksheets”, for example:

|

1 |

SELECT * FROM "SAMPLEDB"."PUBLIC"."SUBMISSION"; |

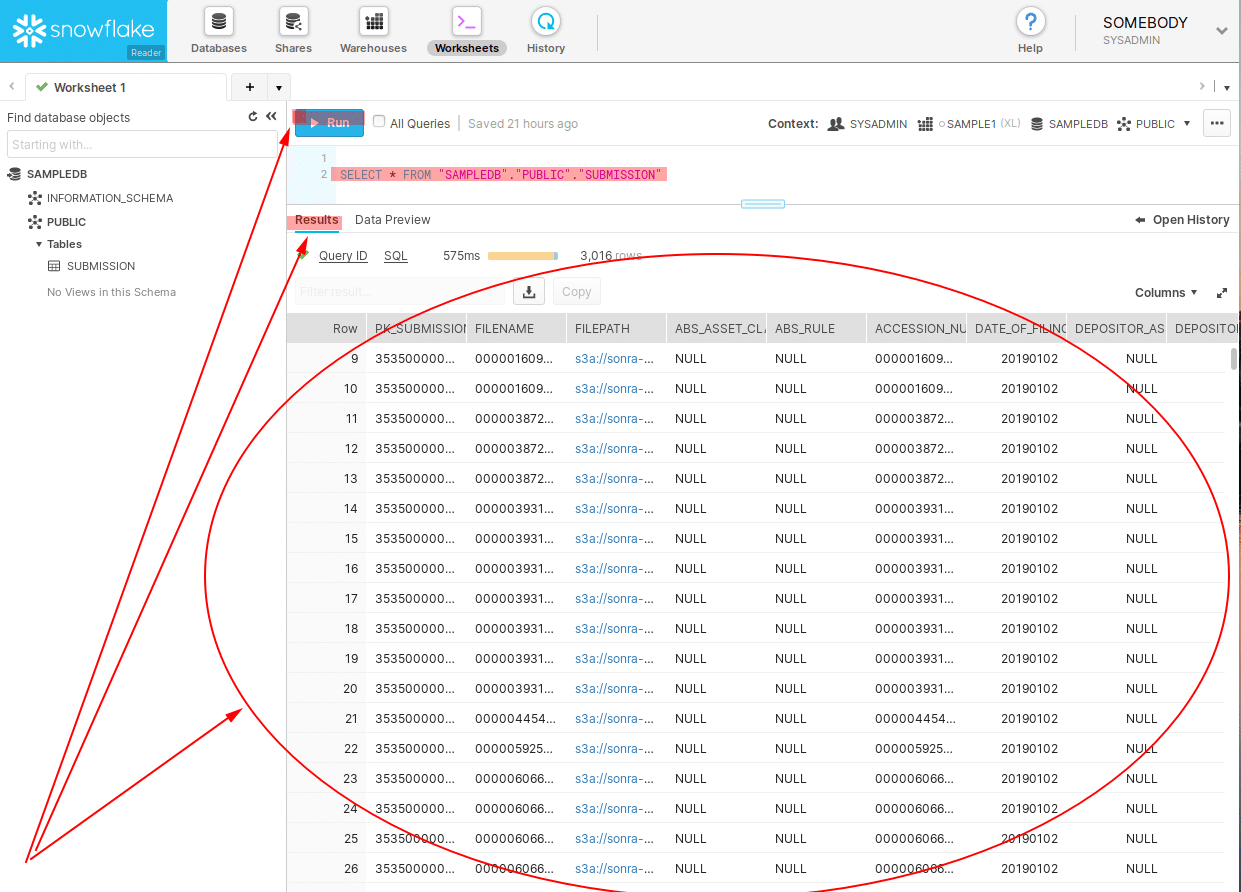

This is what the query results look like.

Conclusion

We have show you how it is easy to convert Edgar XML to Snowflake, and then to analyse and query the data.

Our enterprise edition can be installed on a single node or for very large volumes of XML on a cluster of servers.

If you have any questions please refer to the Flexter FAQ section. You can also request a demo of Flexter or reach out to us directly with any questions.

Enjoyed this post? Have a look at the other posts on our blog.

Contact us for Snowflake professional services.

We created the content in partnership with Snowflake.

In this video, we use Flexter to automatically convert very complex FpML XML to Snowflake tables. Book a demo to see the power of Flexter in action!